In the absence of grade boundaries, but still under pressure to complete data sheets about students, teachers have taken several courses of action:

1. Make up grade boundaries (using some sort of logic)

2. Create grade descriptors for grades 1-9 using some of the information we have been given

3. Just make it all up

Although I have pondered grade boundaries, I don't use marks with my GCSE students, so there is no need for me to come up with percentage or raw-mark grade boundaries. I have also avoided option 2, as it can be complicated and relies on often ambiguous language. To avoid option 3, I have trialled using student comparison to help with our data entry.

I'm currently trialling 'No More Marking', so the idea of comparison was already on my mind when we did this yesterday.

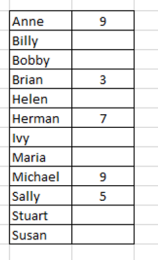

We took a list of our students. Here is a made-up class to help me explain:

Throughout this process, I kept two sources of information about grades and levels of knowledge and skills in mind: Ofqual's Religious Studies GCSE grade descriptors (for grades 2, 5 and 8) and our exam board's mark scheme (in our case, AQA GCSE).

First, we identified the student(s) who we believe could get full marks, and assumed that full marks would be a grade 9. Next, we identified the student who has shown the least aptitude with the course content and skills and allocated him a 3, because we believed he could do slightly more than the grade 2 descriptor describes. We then identified the students we thought would be a grade 5 and a grade 7, using logical increments of knowledge and skills as far as possible.

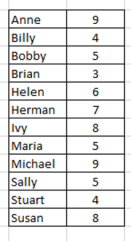

I then asked my colleague a set of questions about the remaining students. I also had student data in front of me, so I knew roughly where to start the questioning. Here is an example of the type of conversation we had:

Me: Billy’s data suggests he should be 4-6. Is he ‘stronger’ or ‘weaker’ than Sally?

Colleague: He gives really good responses in class, but his written work lacks the detail that Sally's has, so weaker.

Me: Is he stronger than Brian?

Colleague: Definitely. He can give examples in his work that Brian can’t.

The conversations were actually more detailed than this, as we could both discuss the specific knowledge and skills that students need to have. Whilst I know this class to some extent, the discussion with my colleague meant that we could consider carefully what each student can and can't do. I can't vouch for my colleague, but I know that if someone did this with me, it would certainly help to clarify what each student needs to do to progress further, because we discussed progress in terms of knowledge and skills, not in terms of how many more marks they need to achieve.

In all cases, we took a holistic view of each student: classroom responses, accuracy of answers, performance in tests and so on. The grade is not based on a single test with marks and grade boundaries.

We finally ended up with a set of class data that was essentially a 'rank' of the class, informed by the grade descriptors and mark scheme. I know that 'ranking' a class can be controversial, but this ranking is in no way shared with students or used with them. The process generated the grades that we needed to enter into our data system but, more usefully I hope, my colleague and I had a good discussion about individual students, their strengths, and the areas where we can help them in the coming months.

I'm not claiming that this is any better than the options at the start of this post, but it is certainly an alternative to using randomly made-up grade boundaries. It also encourages teachers to know their class well (not just the marks students get) and helps to identify the support a student may need in future.
