One reason I’ve been interested in education research over the past couple of years is that, by luck or coincidence, it mainly supports what I already do. I’ve been setting quick 1-5 recap quizzes for years, well before I read cognitive science research on the positive impact of retrieval practice. I’ve not really done much to change how I teach, yet it ‘works’ according to the research.

However, it’s not always as simple as confirming what you already do. Research in education is a minefield. Might it be better to leave well alone?

Here is a (not comprehensive) summary of why using research is problematic:

1. Not enough specific research has been done to draw context-specific conclusions

In the EEF’s publication on marking, ‘A marked improvement’, the authors make the following comment very early on:

‘The quality of existing evidence focused specifically on written marking is low. This is surprising and concerning bearing in mind the importance of feedback to pupils’ progress and the time in a teacher’s day taken up by marking. Few large-scale, robust studies, such as randomised controlled trials, have looked at marking. Most studies that have been conducted are small in scale and/or based in the fields of higher education or English as a foreign language (EFL), meaning that it is often challenging to translate findings into a primary or secondary school context or to other subjects. Most studies consider impact over a short period, with very few identifying evidence on long-term outcomes.’ (p. 5)

This is true of many areas of teaching, yet teachers and schools are being encouraged to use research more.

If I work in a coastal school where the majority of pupils are white boys eligible for free school meals (FSM), will any research be directly applicable to my context?

Is it just a waste of time, given the current lack of useful, applicable research?

2. It can go against our instincts

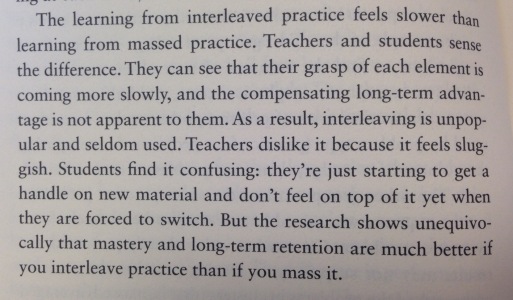

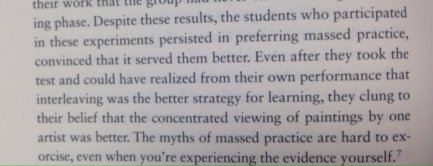

In ‘Make It Stick: The Science of Successful Learning’ by Peter C. Brown, Henry L. Roediger III and Mark A. McDaniel, the authors make a point about interleaved practice and teacher discomfort:

Teachers prefer both themselves and their students to feel comfortable as they learn. Clearly, some research-informed practice won’t always ‘feel’ comfortable.

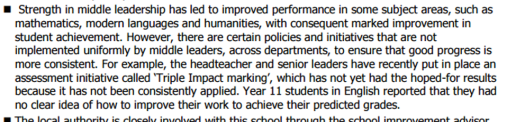

3. Ofsted may not agree

Ofsted have previously commented on what they see and think ‘works’, which is not always what research suggests. There are examples of Ofsted reports commenting on something a school is doing that may not be supported by research. In recent years, as research has become more prominent, such comments have declined, especially where a practice has been ‘debunked’ and Ofsted have tried to make clear that it is not something they look for when inspecting. Should inspectors continue to make judgements based on their collective or personal views, or would stopping make reports impossible to write?

And, perhaps more controversially, should an Ofsted team comment on a school that is actively going against what research suggests is effective?

Is the issue here consistency, or the fact that research may not support it?

4. It’s not always convenient for leaders

One reason so many schools have been reluctant to stop grading lesson observations (even though evidence shows that graded observations are an unreliable way to make consistent, valid judgements) is not that they think grading is a valuable tool for teachers, but that it fits perfectly into a spreadsheet.

5. It doesn’t support a school’s priorities

Schools that want to prioritise English and Maths in the curriculum will not be interested in research suggesting that the Arts or Philosophy might help learning, even where there might be evidence that they can benefit English and Maths. The political gaming of league tables will always mean that leaders prefer what seems to be more direct action, such as giving more curriculum time to core subjects.

6. It’s not the way we’ve always done it

Teachers can be creatures of habit. We like to teach in a way we know, even if it isn’t hugely successful, and we are reluctant to change. It’s comfortable to stick with the way we’ve always done things.

Who cares if research has a better way?

7. We can cherry-pick what we want…

The EEF Toolkit rates ‘peer tutoring’ as having a possible positive effect. I could see this and tell my staff, ‘I want to see peer tutoring in all your classes because it will enhance learning by +5 months.’

However, the evidence behind this summary wouldn’t support that action. It specifies that peer tutoring is most effective as cross-age tutoring, with two years between the students. That wouldn’t be the case within a single class in the UK.

And, crucially, it also states:

‘Peer tutoring appears to be less effective when the approach replaces normal teaching, rather than supplementing or enhancing it, suggesting that peer tutoring is most effectively used to consolidate learning, rather than to introduce new material.’

Research in the wrong hands, with superficial or no in-depth analysis, can be dangerous…

8. …and ignore what we don’t like

I don’t like using group work in my teaching, so I’ll ignore any research about its possible learning benefits.

9. Research suggests…

Even in the EEF Toolkit, each intervention comes with a list of caveats. Nothing is simple. Looking at effect sizes is one approach, but even that is full of complications. All we can ever say is ‘Research suggests…’, and we should always present it with the scepticism it deserves.

Should we be telling teachers how to teach using research that can, at best, suggest what might work?

10. Sources contradict each other

Read any literature review and you will almost certainly find a variety of previous papers with different and often contradictory findings. Interpretation bias can also make a difference. You can pretty much use research to prove or disprove your point if you want to.

As a leader, would you reduce class sizes or not?

- EEF Toolkit & Hattie’s effect sizes (0.4 is seen as the point where something ‘makes a difference’)

11. It’s not what we want it to be

Research on classroom displays suggests that, especially for younger children, too much stimulation can hinder learning, yet in primary schools across the country teachers spend hours on colourful bordettes, elaborate scenes and ‘welcoming’ boards.

Whilst there is research suggesting that some targeted displays can actually accelerate progress, these may not be the wonderfully creative, attention-grabbing scenes that some teachers love to construct.

Sources & references

- http://visible-learning.org/nvd3/visualize/hattie-ranking-interactive-2009-2011-2015.html

- https://educationendowmentfoundation.org.uk/resources/teaching-learning-toolkit

- http://www.evidencebasedteaching.org.au/hattie-effect-size-2016-update/

- http://askforevidence.org/articles/neuromyths-and-why-they-persist-in-the-classroom


I agree with your conclusions, but it is worse than you state. The ‘evidence’ that people like the EEF or John Hattie tout is not applicable to classrooms at all: the measures they use aren’t measures of educational impact but of research clarity, and it is becoming increasingly clear that we should ignore these league tables and ‘effect sizes’. I came across a paper by two of the big names in meta-analysis, Cheung and Slavin (https://tinyurl.com/h7wr6zc), which notes the relationship between the methods used in research and ‘effect sizes’, independent of whatever educational impact the intervention might have. It suggests that you really can’t compare different studies that use different methods.

Simpson (https://tinyurl.com/zhzbnwx) goes further and takes the whole enterprise of ‘effect size’ to task. For example, he uses ‘feedback’ as an exemplar of why you can’t compare studies: many of the feedback studies in the EEF data measure the effect of ‘correct/incorrect’ feedback against a control group given no feedback, while others compare formative feedback with summative feedback, and so on. The same goes for homework: some studies compare homework with no homework, and some compare homework with alternative homework.

Cheung and Slavin argue that we need to be much more careful about adjusting and comparing, but I think I’d go with Simpson’s point about not driving policy on the basis of ‘effect size’ at all: it just isn’t evidence of more or less effective educational interventions; it is evidence of more or less well-conducted studies. This is of no use to a busy teacher, but because the EEF tout these as simple answers, they get adopted.
